Jafari Navimipour, Nima

Name Variants

Jafari Navimipour,Nima

JAFARI NAVIMIPOUR, Nima

N. Jafari Navimipour

Jafari Navimipour, Nima

Jafari Navimipour,N.

J.,Nima

JAFARI NAVIMIPOUR, NIMA

Jafari Navimipour, N.

Nima Jafari Navimipour

Nima JAFARI NAVIMIPOUR

Jafari Navimipour, NIMA

Jafari Navimipour N.

NIMA JAFARI NAVIMIPOUR

J., Nima

Nima, Jafari Navimipour

Navimipour, Nima Jafari

Navimipour, N.J.

Navimpour, Nima Jafari

Navımıpour, Nıma Jafarı

Jafari Navimipour, Nima Jafari

Job Title

Assoc. Prof. Dr.

Main Affiliation

Computer Engineering

05. Faculty of Engineering and Natural Sciences

01. Kadir Has University

Status

Current Staff

Sustainable Development Goals

| SDG | Goal | Research Products |
|---|---|---|
| 11 | SUSTAINABLE CITIES AND COMMUNITIES | 9 |
| 10 | REDUCED INEQUALITIES | 1 |
| 9 | INDUSTRY, INNOVATION AND INFRASTRUCTURE | 18 |
| 12 | RESPONSIBLE CONSUMPTION AND PRODUCTION | 5 |
| 2 | ZERO HUNGER | 5 |
| 3 | GOOD HEALTH AND WELL-BEING | 12 |
| 13 | CLIMATE ACTION | 1 |
| 7 | AFFORDABLE AND CLEAN ENERGY | 10 |
| 5 | GENDER EQUALITY | 0 |
| 6 | CLEAN WATER AND SANITATION | 0 |
| 8 | DECENT WORK AND ECONOMIC GROWTH | 0 |
| 16 | PEACE, JUSTICE AND STRONG INSTITUTIONS | 0 |
| 4 | QUALITY EDUCATION | 0 |
| 15 | LIFE ON LAND | 0 |
| 1 | NO POVERTY | 0 |
| 14 | LIFE BELOW WATER | 2 |
| 17 | PARTNERSHIPS FOR THE GOALS | 0 |

This researcher does not have a Scopus ID.

This researcher does not have a WoS ID.

Scholarly Output

115

Articles

102

Views / Downloads

48/0

Supervised MSc Theses

3

Supervised PhD Theses

1

WoS Citation Count

3293

Scopus Citation Count

4123

WoS h-index

32

Scopus h-index

34

Patents

0

Projects

0

WoS Citations per Publication

28.63

Scopus Citations per Publication

35.85

Open Access Source

30

Supervised Theses

4

| Journal | Count |
|---|---|
| Nano Communication Networks | 6 |
| Sustainable Computing-Informatics & Systems | 5 |
| Cluster Computing | 5 |
| Multimedia Tools and Applications | 4 |
| International Journal of Communication Systems | 4 |

Scopus Quartile Distribution

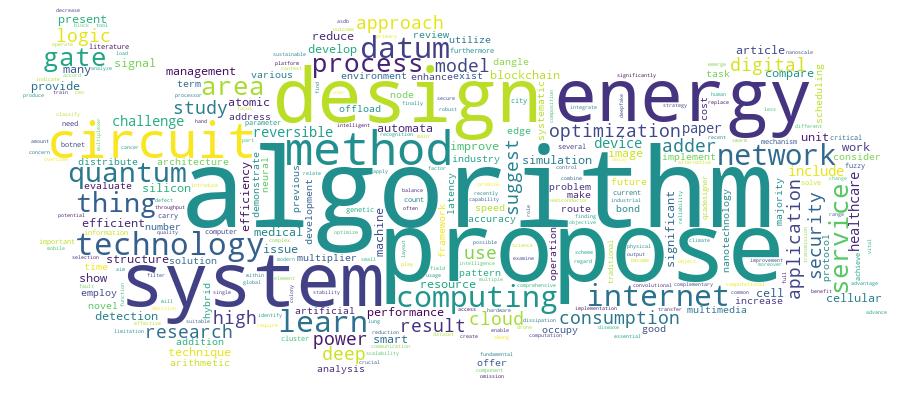

Scholarly Output Search Results

Now showing 1 - 10 of 115

Article, Citation - WoS: 13, Citation - Scopus: 12

Leveraging Explainable Artificial Intelligence for Transparent and Trustworthy Cancer Detection Systems (Elsevier, 2025)

Toumaj, Shiva; Heidari, Arash; Navimipour, Nima Jafari

Timely detection of cancer is essential for enhancing patient outcomes. Artificial Intelligence (AI), especially Deep Learning (DL), demonstrates significant potential in cancer diagnostics; however, its opaque nature presents notable concerns. Explainable AI (XAI) mitigates these issues by improving transparency and interpretability. This study provides a systematic review of recent applications of XAI in cancer detection, categorizing the techniques according to cancer type, including breast, skin, lung, colorectal, brain, and others. It emphasizes interpretability methods, dataset utilization, simulation environments, and security considerations. The results indicate that Convolutional Neural Networks (CNNs) account for 31% of model usage, SHAP is the predominant interpretability framework at 44.4%, and Python is the leading programming language at 32.1%. Only 7.4% of studies address security issues. This study identifies significant challenges and gaps, guiding future research in trustworthy and interpretable AI within oncology.

Article, Citation - WoS: 20, Citation - Scopus: 16

A New Flow-Based Approach for Enhancing Botnet Detection Using Convolutional Neural Network and Long Short-Term Memory (Springer London Ltd, 2025)

Asadi, Mehdi; Heidari, Arash; Navimipour, Nima Jafari

Despite the growing research and development of botnet detection tools, an ever-increasing spread of botnets and their victims is being witnessed. Due to the frequent adaptation of botnets to evolving responses offered by host-based and network-based detection mechanisms, traditional methods are found to lack adequate defense against botnet threats. 
In this regard, the suggestion is made to employ flow-based detection methods and conduct behavioral analysis of network traffic. To enhance the performance of these approaches, this paper proposes utilizing a hybrid deep learning method that combines convolutional neural network (CNN) and long short-term memory (LSTM) methods. CNN efficiently extracts spatial features from network traffic, such as patterns in flow characteristics, while LSTM captures temporal dependencies critical to detecting sequential patterns in botnet behaviors. Experimental results reveal the effectiveness of the proposed CNN-LSTM method in classifying botnet traffic. In comparison with the results obtained by the leading method on the identical dataset, the proposed approach showcased noteworthy enhancements, including a 0.61% increase in precision, a 0.03% augmentation in accuracy, a 0.42% enhancement in recall, a 0.51% improvement in the F1-score, and a 0.10% reduction in the false-positive rate. Moreover, the utilization of the CNN-LSTM framework exhibited robust overall performance and notable expeditiousness in the realm of botnet traffic identification. Additionally, we conducted an evaluation concerning the impact of three widely recognized adversarial attacks on the Information Security Centre of Excellence dataset and the Information Security and Object Technology dataset. The findings underscored the proposed method's propensity for delivering a promising performance in the face of these adversarial challenges.

Article, Citation - WoS: 4, Citation - Scopus: 8

Quantum-based serial-parallel multiplier circuit using an efficient nano-scale serial adder (Soc Microelectronics, Electron Components Materials-MIDEM, 2024)

Wu, Hongyu; Jiang, Shuai; Seyedi, Saeid; Navimipour, Nima Jafari

Quantum dot cellular automata (QCA) is one of the newest nanotechnologies. The conventional complementary metal oxide semiconductor (CMOS) technology was superbly replaced by QCA technology. 
This method uses logic states to identify the positions of individual electrons rather than defining voltage levels. A wide range of optimization factors, including reduced power consumption, quick transitions, and an extraordinarily dense structure, are covered by QCA technology. On the other hand, the serial-parallel multiplier (SPM) circuit is an important circuit by itself, and it is also very important in the design of larger circuits. This paper defines an optimized SPM circuit using QCA. It can integrate the benefits of serial and parallel processing to increase efficiency and decrease computation time. These advantages make this multiplier framework a crucial element in numerous applications, including complex arithmetic computations and signal processing. This research presents a new QCA-based SPM circuit to optimize the multiplier circuit's performance and enhance the overall design. The proposed framework is an amalgamation of a high-performance architecture with efficient path planning. In addition, the proposed QCA-based SPM circuit is based on the majority gate and a 1-bit serial adder (BSA). The BSA circuit has 34 cells and a 0.04 µm² area and uses 0.5 clock cycles. The outcomes showed that the suggested QCA-based SPM circuit occupies a mere 0.28 µm² area, requires 222 QCA cells, and demonstrates a latency of 1.25 clock cycles. 
This work contributes to the existing literature on QCA technology, also emphasizing its capabilities in advancing VLSI circuit layout via optimized performance.

Article, Citation - WoS: 1, Citation - Scopus: 1

Scalable and Low-Power Reversible Logic for Future Devices: QCA and IBM-Based Gate Realization (Elsevier, 2025)

Ahmadpour, Seyed-Sajad; Navimipour, Nima Jafari; Zohaib, Muhammad; Misra, Neeraj Kumar; Pour, Mahsa Rastegar; Rasmi, Hadi; Das, Jadav Chandra

One such revolutionary approach to changing the nano-electronic landscape is integrating reversible logic with quantum dot technology that will replace the conventional complementary metal-oxide semiconductors (CMOS) circuits for ultra-high speed, low density, and energy-efficient digital designs. The implementation of the reversible structure under the most inflexible conditions, as executed by quantum laws, is a highly challenging task. Furthermore, the enormous occupying areas seriously compromise the accuracy of the output in quantum dot circuits. Because of this challenge, quantum circuits can be employed as fundamental building blocks in high-performance digital systems since their implementation has a key impact on overall system performance. This study discusses a paradigm shift in nanoscale digital design by using a 4 x 4 reversible gate that redefines the basis of efficiency and precision. This reversible gate is elaborately used in a reversible full-adder circuit, fully symbolizing the core of minimum area, ultra-low energy consumption, and perfect output accuracy. The proposed reversible circuits have been fully realized using quantum-dot cellular automata (QCA) technology, then simulated and verified with highly reliable tools such as Qiskit IBM and QCADesigner 2.0.3. Furthermore, simulation results demonstrated the superiority of the proposed QCA-based adder, which reduced occupied area by 7.14% and cell count by 11.57%. 
This work resolves some problems and opens new boundaries toward the future of digital circuits by addressing the main challenges of stability and pushing the boundaries of reversible logic design.

Book Part, Citation - Scopus: 2

Machine/Deep Learning Techniques for Multimedia Security (Inst Engineering Tech-IET, 2023)

Heidari, Arash; Navimipour, Nima Jafari; Azad, Poupak

Multimedia security based on Machine Learning (ML)/Deep Learning (DL) is a field of study that focuses on using ML/DL techniques to protect multimedia data such as images, videos, and audio from unauthorized access, manipulation, or theft. Developing and implementing algorithms and systems that use ML/DL techniques to detect and prevent security breaches in multimedia data is the main subject of this field. These systems use techniques like watermarking, encryption, and digital signature verification to protect multimedia data. The advantages of using ML/DL in multimedia security include improved accuracy, scalability, and automation. ML/DL algorithms can improve the accuracy of detecting security threats and help identify multimedia data vulnerabilities. Additionally, ML models can be scaled up to handle large amounts of multimedia data, making them helpful in protecting big datasets. Finally, ML/DL algorithms can automate the process of multimedia security, making it easier and more efficient to protect multimedia data. The disadvantages of using ML/DL in multimedia security include data availability, complexity, and black box models. ML and DL algorithms require large amounts of data to train the models, which can sometimes be challenging. Developing and implementing ML algorithms can also be complex, requiring specialized skills and knowledge. Finally, ML/DL models are often black box models, which means it can be difficult to understand how they make their decisions. This can be a challenge when explaining the decisions to stakeholders or auditors. 
Overall, multimedia security based on ML/DL is a promising area of research with many potential benefits. However, it also presents challenges that must be addressed to ensure the security and privacy of multimedia data.

Review, Citation - WoS: 51, Citation - Scopus: 66

Resilient and Dependability Management in Distributed Environments: a Systematic and Comprehensive Literature Review (Springer, 2023)

Amiri, Zahra; Heidari, Arash; Navimipour, Nima Jafari; Unal, Mehmet

With the galloping progress of the Internet of Things (IoT) and related technologies in multiple facets of science, distribution environments, namely cloud, edge, fog, Internet of Drones (IoD), and Internet of Vehicles (IoV), carry special attention due to their providing a resilient infrastructure in which users can be sure of a secure connection among smart devices in the network. By considering particular parameters which overshadow the resiliency in distributed environments, we found several gaps in the investigated review papers that did not comprehensively touch on significantly related topics as we did. So, based on the resilient and dependable management approaches, we put forward a beneficial evaluation in this regard. As a novel taxonomy of distributed environments, we presented a well-organized classification of distributed systems. At the terminal stage, we selected 37 papers in the research process. We classified our categories into seven divisions and separately investigated each one's main ideas, advantages, challenges, and strategies, checking whether they involved security issues or not, simulation environments, datasets, and their environments to draw a cohesive taxonomy of reliable methods in terms of qualitative in distributed computing environments. This well-performed comparison enables us to evaluate all papers comprehensively and analyze their advantages and drawbacks. 
The SLR indicated that security, latency, and fault tolerance are the most frequent parameters utilized in the studied papers, which shows they play pivotal roles in the resiliency management of distributed environments. Most of the articles reviewed were published in 2020 and 2021. Besides, we proposed several future works based on existing deficiencies that can be considered for further studies.

Article, Citation - WoS: 89, Citation - Scopus: 120

A new lung cancer detection method based on the chest CT images using Federated Learning and blockchain systems (Elsevier, 2023)

Heidari, Arash; Javaheri, Danial; Toumaj, Shiva; Navimipour, Nima Jafari; Rezaei, Mahsa; Unal, Mehmet

With an estimated five million fatal cases each year, lung cancer is one of the significant causes of death worldwide. Lung diseases can be diagnosed with a Computed Tomography (CT) scan. The scarcity and trustworthiness of human eyes is the fundamental issue in diagnosing lung cancer patients. The main goal of this study is to detect malignant lung nodules in a CT scan of the lungs and categorize lung cancer according to severity. In this work, cutting-edge Deep Learning (DL) algorithms were used to detect the location of cancerous nodules. Also, the real-life issue is sharing data with hospitals around the world while bearing in mind the organizations' privacy issues. Besides, the main problems for training a global DL model are creating a collaborative model and maintaining privacy. This study presented an approach that takes a modest amount of data from multiple hospitals and uses blockchain-based Federated Learning (FL) to train a global DL model. The data were authenticated using blockchain technology, and FL trained the model internationally while maintaining the organization's anonymity. First, we presented a data normalization approach that addresses the variability of data obtained from various institutions using various CT scanners. 
Furthermore, using a CapsNets method, we classified lung cancer patients in local mode. Finally, we devised a way to train a global model cooperatively utilizing blockchain technology and FL while maintaining anonymity. We also gathered data from real-life lung cancer patients for testing purposes. The suggested method was trained and tested on the Cancer Imaging Archive (CIA) dataset, Kaggle Data Science Bowl (KDSB), LUNA 16, and the local dataset. Finally, we performed extensive experiments with Python and its well-known libraries, such as Scikit-Learn and TensorFlow, to evaluate the suggested method. The findings showed that the method effectively detects lung cancer patients. The technique delivered 99.69% accuracy with the smallest possible categorization error.

Doctoral Thesis

Nano-Scale Arithmetic and Logic Unit Design for Energy-Efficient Electronic Devices Based on Quantum Dots [Kuantum Noktalarına Dayalı Enerji Verimli Elektronik Cihazlar için Nano Ölçekli Aritmetik ve Mantık Birimi Tasarımı] (2025)

Zohaib, Muhammad; Navimipour, Nima Jafari; Aydemir, Mehmet Timur

Electronics is a fundamental component of modern technologies and enables the transmission of electrical information with the help of simple components such as transistors, diodes, capacitors, and sensors. By controlling current, these components perform essential signal processing functions such as amplification, switching, and modulation. Today's high-performance signal processing applications are made possible by recent advances in materials science and nanotechnology that make these systems faster, smaller, and less energy-hungry. Signal processing has had a significant impact on the development of many elements of modern life, including telecommunications, education, healthcare, industry, and security. The semiconductor industry is the primary driver of signal processing innovation, producing increasingly sophisticated electronic devices and circuits in response to global demand. In addition, the central processing unit (CPU) is described as the "brain" of computers, of all electronic devices, and of signal processing. The CPU is a critical electronic device that contains vital components such as memory, multipliers, and adders. One of the CPU's essential components is the arithmetic and logic unit (ALU), which carries out the arithmetic and logical operations within all CPU operations, such as addition, multiplication, and subtraction. However, delay, occupied area, and energy consumption are important parameters in ALU circuits. Because existing ALU designs suffer from problems such as high delay, large area, and high energy consumption, implementing electronic circuits based on a new technology can significantly improve the performance of all signal processing devices, such as microcontrollers, microprocessors, and printed devices, with high speed and low area usage. Quantum-dot cellular automata (QCA) is an effective technology for addressing these shortcomings in all electronic circuits and signal processing applications. It is being investigated as an alternative to established technologies such as CMOS and VLSI, and offers advantages such as ultra-low power consumption, high device density, fast operating speeds in the THz range, and reduced circuit complexity. This research proposes an innovative ALU design that improves electronic devices such as microcontrollers by applying advanced QCA nanotechnology. The primary goal is to present a novel ALU architecture that fully exploits the potential of QCA nanotechnology. Using a new and efficient approach, the fundamental logic gates are skillfully used in a coplanar layout based on a single non-rotated cell. In addition, this work presents enhanced 1-bit and 2-bit arithmetic and logic units in quantum-dot cellular automata technology. The proposed design includes logic operations, arithmetic operations, a full adder (FA) design, and multiplexers. All proposed designs were evaluated and verified using the powerful simulation tool QCADesigner. The simulation results show that the proposed ALU achieves improvements of 42.48% and 64.28% in cell count and total occupied area, respectively, compared with the best previous single-layer and multi-layer designs.

Article, Citation - WoS: 19, Citation - Scopus: 20

A Nano-Scale Design of Arithmetic and Logic Unit for Energy-Efficient Signal Processing Devices Based on a Quantum-Based Technology (Springer, 2025)

Zohaib, Muhammad; Navimipour, Nima Jafari; Aydemir, Mehmet Timur; Ahmadpour, Seyed-Sajad

Signal processing has had a significant impact on the development of many elements of modern life, including telecommunications, education, healthcare, industry, and security. The semiconductor industry is the primary driver of signal processing innovation, producing ever-more sophisticated electronic devices and circuits in response to global demand. In addition, the central processing unit (CPU) is described as the "brain" of a computer or all electronic devices and signal processing. The CPU is a critical electronic device that includes vital components such as memory, multiplier, adder, etc. Also, one of the essential components of the CPU is the arithmetic and logic unit (ALU), which executes the arithmetic and logical operations within all types of CPU operations, such as addition, multiplication, and subtraction. However, delay, occupied area, and energy consumption are essential parameters in ALU circuits. Since recent ALU designs have experienced problems like high delay, high occupied area, and high energy consumption, implementing electronic circuits based on new technology can significantly boost the performance of entire signal processing devices, including microcontrollers, microprocessors, and printed devices, with high speed and low occupied space. Quantum dot cellular automata (QCA) is an effective technology for implementing all electronic circuits and signal processing applications to solve these shortcomings. 
It is a transistor-less nanotechnology being explored as a successor to established technologies like CMOS and VLSI due to its ultra-low power dissipation, high device density, fast operating speed in THz, and reduced circuit complexity. This research proposes a ground-breaking ALU that upgrades electrical devices such as microcontrollers by applying cutting-edge QCA nanotechnology. The primary goal is to offer a novel ALU architecture that fully utilizes the potential of QCA nanotechnology. Using a new and efficient approach, the fundamental gates are skillfully utilized with a coplanar layout based on a single non-rotated cell. Furthermore, this work presents an enhanced 1-bit and 2-bit arithmetic logic unit in quantum dot cellular automata. The recommended design includes logic, arithmetic operations, full adder (FA) design, and multiplexers. Using the powerful simulation tool QCADesigner, all proposed designs are evaluated and verified. The simulation outcomes indicate that the suggested ALU has 42.48% and 64.28% improvements concerning cell count and total occupied area in comparison to the best earlier single-layer and multi-layer designs.

Article, Citation - WoS: 1

A Hybrid Deep Learning Framework Using Synthetic Oversampling, Autoencoder, Convolutional Neural Networks, and an Attention Mechanism for Credit Card Fraud Detection (Springer Nature, 2025)

Kiaei, Ali Akbar; Navimipour, Nima Jafari; Pour, Narges Mohammadali; Heidari, Arash; Zavvar, Mohammad; Jafari, Mojtaba

Credit card fraud is still a big problem for banks and other financial organizations throughout the globe. It hurts consumer confidence and financial stability. Despite significant progress in fraud detection, existing algorithms struggle with highly imbalanced datasets dominated by legitimate transactions. 
This article addresses this issue by proposing an approach that combines the Synthetic Minority Oversampling Technique (SMOTE), an autoencoder, Convolutional Neural Networks (CNNs), and an attention mechanism into one framework (SMOTE-AE-CNN-Att). The technique starts by utilizing SMOTE to balance the dataset, then uses AE-CNN-Att models to extract features, and then uses classic Machine Learning (ML) methods to classify the data. The suggested method has been shown to be very accurate (>99.9%) in finding fake transactions while keeping important performance metrics like precision (up to 90.07%), recall (up to 91.13%), and F1-score (up to 90.60%). When compared to other techniques, the SMOTE-AE-CNN-Att model does a better job of finding a good balance between accuracy and recall, which is very important for finding fraud. This research shows how Deep Learning (DL) methods might make it much easier to detect fraud in credit card transactions. This would lead to better security and consumer protection in financial transactions.
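
As a rough consistency check on the figures quoted in the abstract above, the F1-score is the harmonic mean of precision and recall. The abstract reports each value as an "up to" maximum and does not say whether they come from the same classifier, so the calculation below assumes (hypothetically) that they do:

```latex
F_1 = \frac{2PR}{P + R}
    = \frac{2 \times 0.9007 \times 0.9113}{0.9007 + 0.9113}
    = \frac{1.6416}{1.8120}
    \approx 0.9060
```

Under that assumption, the reported F1-score of 90.60% agrees with the reported precision (90.07%) and recall (91.13%).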